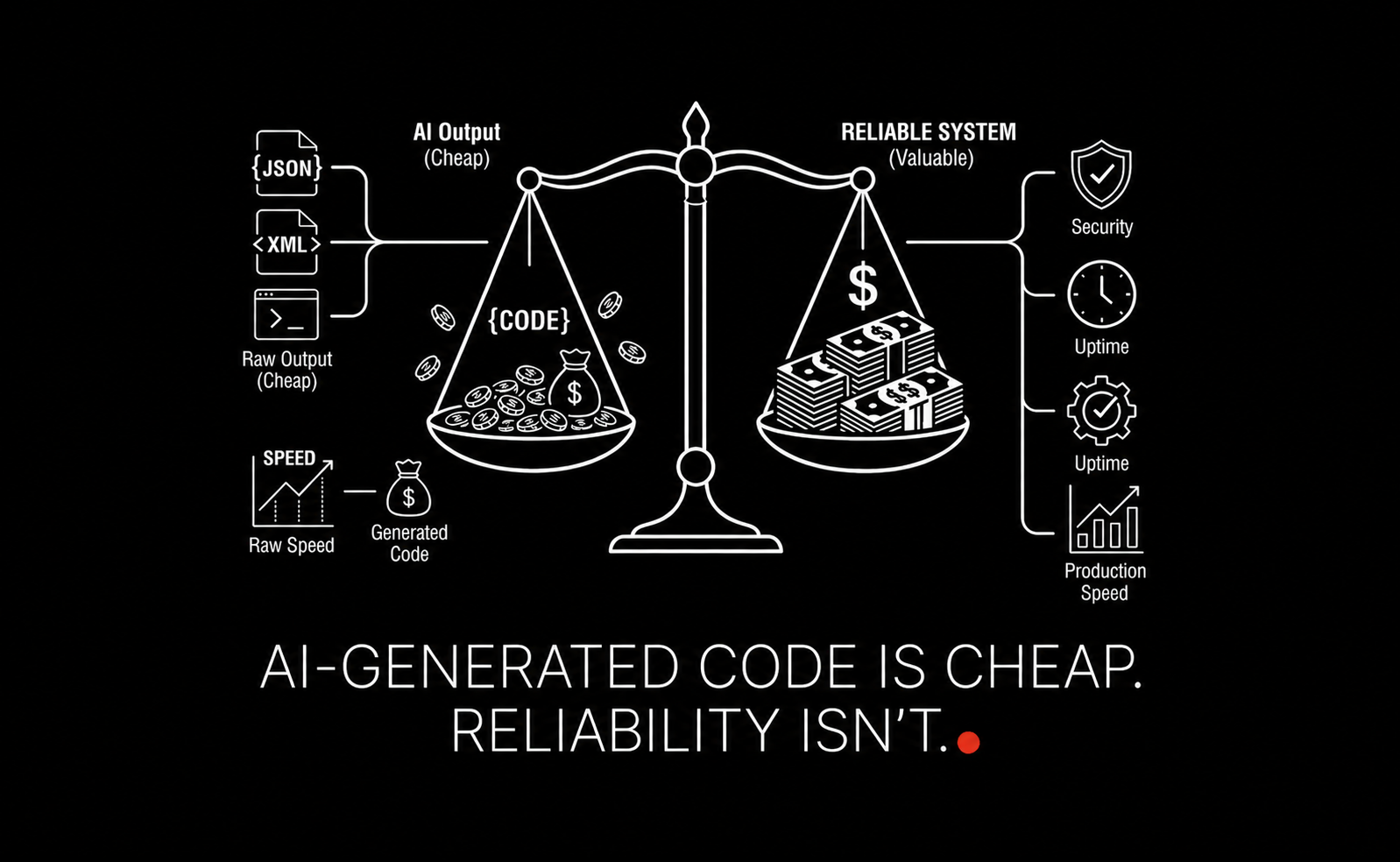

TL;DR: AI-assisted development has changed the economics of software. Writing code is faster, cheaper, and often good enough for demos. But enterprise delivery still bottlenecks on design judgment, validation, observability, security, and operational discipline. The winners in this new environment will not be the teams that generate the most code. They will be the teams that can reliably decide what should ship, how it should fail, and how it should be governed in production.

This article explains where quality breaks in AI-generated systems, how to structure delivery so speed does not create hidden risk, and what practical standards separate impressive prototypes from systems a business can trust.

At LAXIMA, our point of view is simple: AI has not removed engineering difficulty. It has relocated it. The hard part is no longer typing code. The hard part is building systems that stay correct under messy data, edge cases, organizational constraints, and production load.

If you are evaluating agentic development, it helps to understand the broader shift in the software lifecycle. We covered that in how AI is reshaping the SDLC. For teams moving from experimentation to enterprise deployment, our guide to implementing agentic AI systems for business automation is a useful companion.

What has fundamentally changed in software development with AI-assisted coding?

AI has made code generation fast and cheap, shifting the bottleneck away from writing code toward system design, validation, observability, and governance. The challenge is no longer producing code, but ensuring it behaves correctly in real-world conditions.

Why can increased development speed actually reduce overall progress?

Faster code generation often leads to more unverified complexity. Teams may produce more features, but without proper validation, review, and testing, this results in higher incident rates, more rollbacks, and hidden operational debt.

Why do AI-generated systems often fail in production?

They usually fail at boundaries: auth, data quality, retries, API variability, tenancy rules, logging gaps, and ambiguous business logic. Demos hide these issues because they optimize for happy-path success.

Why are prototypes misleading indicators of production readiness?

Prototypes focus on the “happy path” and optimize for speed and demonstration value. Production systems must handle edge cases, security, observability, compliance, and failure recovery, which often account for the majority of the real effort.

What is the biggest bottleneck in AI-assisted software development?

For most serious systems, the bottleneck is no longer raw implementation speed. It is judgment: design quality, review capacity, integration correctness, observability, governance, and safe rollout.

Does AI-generated code reduce software quality?

Not automatically. It can improve productivity and even quality in repetitive tasks. The risk appears when teams use it to skip architecture thinking, validation, testing, observability, or security review. AI changes where quality work is needed; it does not remove the need for it.

How should teams review AI-generated code?

Start with design review, then implementation review, then operational review, then governance review. Looking only at the diff is not enough for AI-heavy systems.

Should enterprises build custom AI infrastructure from scratch?

Usually no. Most teams should use proven components for commodity layers and reserve custom development for domain logic, policy, workflow orchestration, and differentiating user experience.

What metrics matter most for production AI systems?

Useful metrics include task success rate, change failure rate, latency, incident frequency, human handoff rate, groundedness or accuracy measures, cost per successful outcome, and auditability coverage.

What is the difference between an AI prototype and a production AI system?

A prototype proves possibility. A production system proves reliability, security, auditability, and operational control under real conditions.

How can a company tell if it is ready to scale AI automation?

If it has clear use-case ownership, evaluation standards, observability, governance controls, and rollout discipline, it is closer. If it mainly has demos and enthusiasm, it is still early. A structured readiness review often reveals the real blockers.

Why this is the real SEO angle now: AI code generation shifted the bottleneck

Search interest around AI coding tools has exploded because the visible layer is exciting: faster scaffolding, faster pull requests, faster proof-of-concepts, fewer blank screens, and much lower effort for repetitive implementation work.

But that is no longer the most valuable question.

The strategic question is this: what happens after code becomes abundant?

When code generation costs fall dramatically, four things happen at once:

Teams create more surface area than they can properly review.

Architecture mistakes spread faster because they are implemented quickly.

Operational debt accumulates earlier, often hidden behind polished demos.

Senior judgment becomes more leveraged, not less.

That is why the better search intent is not “how to generate code with AI.” It is “how to build reliable, secure, production-ready systems when AI makes code cheap.”

This is also why the conversation increasingly overlaps with workflow design, review capacity, and operating models. Even tool choice matters less than process maturity. Teams often over-focus on the assistant and under-invest in deployment discipline. If you are comparing assistants, our breakdown of agentic coding tools and their tradeoffs can help, but tools alone do not solve the real bottleneck.

The throughput trap: when more code means less progress

In traditional software teams, output metrics like tickets closed, lines changed, or pull requests merged were already imperfect. In AI-assisted teams, they become actively misleading.

A team can now produce in one week what used to take three. That sounds like a clear gain. Sometimes it is. But often, what has really increased is not validated functionality. It is unverified complexity.

Why output volume is a weak signal

Consider two teams:

| Team | Weekly PRs | Median review time | Incident rate after release | Rollback frequency |
|---|---|---|---|---|
| Team A | 48 | 6 hours | 7 incidents/month | 3 rollbacks/month |
| Team B | 19 | 18 hours | 1 incident/month | 0-1 rollbacks/month |

If you optimize only for visible speed, Team A looks better. If you optimize for business outcomes, Team B is likely outperforming.

That gap is common in AI-assisted delivery. More code lands. More edge cases escape. More reviewers skim. More assumptions go unchallenged.

Review cost did not fall with generation cost

This is a critical asymmetry. AI dramatically lowers the cost of producing code, but the cost of understanding whether that code is correct, safe, and aligned with the system has not fallen by nearly as much.

The same dynamic appears in open source, where maintainers face a flood of low-signal AI-generated issues, patches, and security reports. Reviewer capacity remains finite even as contribution volume explodes. In enterprise environments, the “maintainer” may be a staff engineer, security lead, SRE, product owner, or compliance reviewer. The constraint is the same: review attention is scarce.

The new failure mode: local correctness, system-level wrongness

AI-generated code often looks reasonable in isolation. It compiles. Tests may pass. The style may be consistent. Yet it still fails at the system level.

Examples:

A generated integration uses a deprecated auth path that still exists in the codebase but is no longer active.

A tool call handles the happy path but ignores pagination, retries, or partial data.

A seemingly harmless refactor breaks audit logging on a regulated workflow.

A generated endpoint bypasses a tenancy boundary because context about account scoping was incomplete.

A model-generated schema mapper silently drops unknown fields, masking upstream drift until downstream logic fails.

None of these are syntax problems. They are judgment problems.
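
The schema-mapper example above can be made concrete. The following is a minimal sketch, assuming a hypothetical customer record with three known fields (`KNOWN_FIELDS` and `map_customer` are illustrative names, not part of any real system). Instead of silently dropping fields it does not recognize, the mapper reports them so upstream drift becomes visible early.

```python
from dataclasses import dataclass, field

# Hypothetical field set for an illustrative "customer" record.
KNOWN_FIELDS = {"id", "email", "plan"}


@dataclass
class MappedRecord:
    data: dict
    unknown_fields: list = field(default_factory=list)


def map_customer(payload: dict) -> MappedRecord:
    """Map known fields and report, rather than silently drop, the rest."""
    missing = KNOWN_FIELDS - set(payload)
    if missing:
        raise ValueError(f"missing required fields: {sorted(missing)}")
    data = {key: payload[key] for key in KNOWN_FIELDS}
    unknown = sorted(set(payload) - KNOWN_FIELDS)
    return MappedRecord(data=data, unknown_fields=unknown)


record = map_customer(
    {"id": 1, "email": "a@example.com", "plan": "pro", "region": "eu"}
)
print(record.unknown_fields)  # ['region']
```

In practice the caller would log or count `unknown_fields` so that a new upstream field triggers a review instead of a downstream surprise.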

Where quality actually breaks in AI-generated systems

Most AI-generated systems do not fail in the obvious places. Boilerplate is often fine. CRUD scaffolding is usually passable. The trouble starts at boundaries, under stress, and in ambiguous requirements.

1. Integration boundaries

External systems are messy. APIs are inconsistent. Authentication states expire. Rate limits trigger unpredictably. Payloads vary. Legacy fields linger for years. The generated code usually handles the documented path. Production requires handling the real one.

Common failure points include:

Incorrect retry logic that turns transient failures into duplicated actions

Timeout values tuned for demos, not real latency distributions

Pagination bugs that work on test data but fail after page 1 in production

Weak error normalization that leaks raw upstream responses into core workflows

Schema assumptions that break when providers add optional fields or change nullability

In agentic systems, these flaws are even more dangerous because one unreliable tool degrades the whole chain.
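
As an illustration of the first failure point, here is a rough sketch of retry logic that avoids duplicated actions. It assumes the upstream system deduplicates requests that carry the same idempotency key; `TransientError`, `call_with_retry`, and the `action` callable are hypothetical names introduced for this example.

```python
import time
import uuid


class TransientError(Exception):
    """Stands in for a timeout or 5xx response from an upstream system."""


def call_with_retry(action, *, max_attempts=3, base_delay=0.1, sleep=time.sleep):
    """Retry `action` with exponential backoff, reusing one idempotency key.

    `action` is a hypothetical callable that accepts the key; the upstream
    system is assumed to deduplicate requests that carry the same key.
    """
    key = str(uuid.uuid4())  # generated once, shared by every attempt
    for attempt in range(1, max_attempts + 1):
        try:
            return action(key)
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and surface the failure to the caller
            sleep(base_delay * 2 ** (attempt - 1))
```

The important detail is that the key is created outside the loop. Generating a fresh key per attempt is a common way for a retry to turn one intended action into several.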

2. Trust boundaries and authorization

Authentication and authorization are where “almost correct” is still wrong. AI assistants can generate an auth middleware or RBAC check quickly, but enterprise systems depend on precise trust decisions:

Who can access what resource?

Under which tenant or account context?

Can access be delegated?

What is visible in logs?

What happens when identity data is stale or partially missing?

A small mistake here is not a code quality issue. It is a business risk issue.
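
To show what a precise trust decision can look like in code, here is a minimal deny-by-default sketch. The `Principal` and `Document` types and the role names are assumptions made for illustration; a real system would delegate most of this to its identity and access framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principal:
    user_id: str
    tenant_id: str
    roles: frozenset


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant_id: str


def can_read(principal: Principal, doc: Document) -> bool:
    """Deny by default: the tenant match is checked before any role check."""
    if not principal.tenant_id or principal.tenant_id != doc.tenant_id:
        return False  # missing or mismatched tenant context never passes
    return "reader" in principal.roles or "admin" in principal.roles
```

Note that an empty tenant identifier is rejected even when both sides are empty, which covers the "identity data is stale or partially missing" case from the list above.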

This matters especially in retrieval-heavy and memory-enabled systems. If you are building assistants over internal company data, access design cannot be bolted on later. Our article on trusted RAG systems explains why retrieval quality and permission design have to be treated as one architecture problem, not two separate features.

3. Observability gaps

Many AI-generated projects ship with logs, but not with useful observability.

There is a difference between:

having logs, and

being able to explain a production incident in 15 minutes.

For AI systems, useful observability usually needs at least five layers:

Infrastructure telemetry: latency, CPU, memory, saturation

Application telemetry: request paths, exceptions, response codes

Domain telemetry: business events, decision states, tenant identifiers

Model telemetry: prompts, tool calls, token usage, model/version metadata

Safety telemetry: policy triggers, blocked actions, fallback activations

Without this stack, incidents become archaeology.

4. Failure handling and determinism

A system that “usually works” is much worse than a system that predictably fails. The former destroys trust slowly; the latter can be designed around.

Good production AI systems need deterministic contracts wherever possible:

explicit timeout thresholds

explicit retry rules

stable error envelopes

fallback behavior with clear triggers

idempotency for repeated operations

clear handoff to humans when confidence drops

When these are missing, teams get what looks like intelligence but behaves like randomness.
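
Two of the contracts above, a stable error envelope and a clear human handoff rule, can be sketched briefly. The error codes, the `Envelope` shape, and the 0.8 confidence threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class Envelope:
    ok: bool
    value: Any = None
    error_code: Optional[str] = None
    retryable: bool = False


def run_tool(tool, *args) -> Envelope:
    """Wrap a tool call so callers always receive the same result shape."""
    try:
        return Envelope(ok=True, value=tool(*args))
    except TimeoutError:
        return Envelope(ok=False, error_code="timeout", retryable=True)
    except ValueError:
        return Envelope(ok=False, error_code="invalid_input")
    except Exception:
        return Envelope(ok=False, error_code="internal")


def answer_or_handoff(result: Envelope, confidence: float, threshold: float = 0.8) -> str:
    """Route to a human when the call failed or confidence is below threshold."""
    if not result.ok or confidence < threshold:
        return "handoff_to_human"
    return "respond"
```

Because every tool returns the same envelope, downstream logic can decide on retries and fallbacks without parsing raw upstream errors.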

5. Data and annotation debt

Annotation workflow is an important part of AI quality. But many teams still underestimate how often “AI quality” problems are actually upstream data problems.

If labels are inconsistent, schemas unstable, or source-of-truth logic unclear, AI simply accelerates the spread of ambiguity.

Examples:

Support tickets labeled differently across regions make classifier performance appear unstable.

CRM fields with weak governance produce retrieval answers that are technically accurate but contextually wrong.

Evaluation datasets are too small or too clean, hiding real-world failure patterns.

Poor data discipline creates downstream noise that teams often misdiagnose as a model problem.

6. Hidden dead code and false affordances

One subtle but recurring problem with AI code generation is that models treat everything present in the repository as fair game. They do not know which paths are dead, deprecated, feature-flagged, or dangerous unless the context makes that explicit.

That leads to surprising defects:

Generated features built on obsolete modules

Use of utility functions that remain for backward compatibility only

Revival of old configuration pathways that no longer match current infrastructure

Assumptions based on test fixtures that no longer represent production reality

Humans often know which parts of the system are “alive.” AI does not, unless you encode that knowledge in conventions, documentation, or guardrails.

Demos are easy because they ignore the expensive parts

A prototype can be useful in a day. That is one of AI’s biggest strengths. The risk comes when organizations mistake prototype speed for production readiness.

The happy path is cheap. Operations are expensive.

What prototypes optimize for

visible progress

novelty

functional plausibility

quick user feedback

stakeholder excitement

What production systems optimize for

permission correctness

auditability

failure recovery

tenant isolation

incident response

cost control

compliance evidence

performance under load

This is why a polished demo can still be only 20% of the work.

In enterprise settings, the hidden tasks often dominate the effort:

| Workstream | Prototype effort | Production effort |
|---|---|---|
| Core functionality | High | Medium |
| Auth and permissions | Low | High |
| Observability | Low | High |
| Testing and evaluation | Low | High |
| Compliance and governance | None or low | High |
| Rollback and resilience | None | High |
| Cost controls | Low | Medium to high |

A useful rule: if a system touches regulated data, customer workflows, financial actions, or operational decisions, assume the demo represented less than half the true build effort.

The only order that works: foundations before acceleration

The most common sequencing mistake in AI-assisted delivery is starting with generation and postponing the disciplines that determine quality later.

A stronger order is:

Define business risk and success criteria

Set architecture and trust boundaries

Choose proven components

Establish data and evaluation discipline

Implement with AI assistance

Add testing, observability, and controls before rollout

Release gradually with feedback loops

This is slower for the first week and much faster over the next six months.

Step 1: define what failure means before you build

Before generating anything, answer:

What is the cost of a wrong answer or wrong action?

Is human approval required?

What data cannot leave the boundary?

What must be logged for audit?

What service-level expectation matters most: latency, accuracy, uptime, cost, or traceability?

These answers shape architecture. Without them, generated code tends to optimize the easiest visible path.

Step 2: design boundaries and invariants

Review should begin at the design layer, not the diff layer.

Teams need to agree on:

trusted versus untrusted inputs

identity propagation

state transitions

error handling rules

data retention policies

rollback mechanisms

what must never happen, even under failure

These invariants are what senior engineers protect. AI can implement them, but it will not invent them reliably.

Step 3: borrow complexity before you build it

This point deserves emphasis. Many organizations still build custom orchestration, custom skill systems, custom wrappers, and custom middleware before they have validated the business workflow.

That is usually backward.

Use mature components where possible for:

authentication

queueing

workflow orchestration

rate limiting

caching

tracing

policy enforcement

Custom work should focus on what is actually differentiating: business logic, domain workflows, safety policy, and user experience.

This principle also applies to coding environments. A disciplined setup in tools like Cursor or Claude Code improves consistency and reduces context drift. See our practical guide on setting up Cursor for agentic coding if your team is still treating AI coding as glorified autocomplete.

Step 4: set data, annotation, and evaluation standards early

For AI-heavy systems, evaluation should not be an afterthought.

You need:

clear label definitions

versioned datasets

golden test cases

edge-case suites

review criteria for disagreements

business-grounded metrics

Examples of useful metrics by use case:

| Use case | Useful metric | Avoid relying on |
|---|---|---|
| Support triage | routing accuracy, escalation precision | generic benchmark scores |
| Retrieval assistant | answer groundedness, citation validity | fluency alone |
| Document extraction | field-level F1, exception rate | sample success screenshots |
| Agentic workflow | task completion rate, handoff rate, unsafe action rate | demo completion rate |
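
As one example of a business-grounded metric from the table, field-level F1 for document extraction can be computed in a few lines. This is a simplified sketch that treats each exact (field, value) match as a hit; the sample invoice values are made up, and real evaluations often need normalization (dates, currency formats) before comparison.

```python
def field_level_f1(predicted: dict, expected: dict) -> float:
    """F1 over exact (field, value) pairs for one extracted document.

    Values must be hashable (strings, numbers) for the set comparison.
    """
    pred_pairs = set(predicted.items())
    gold_pairs = set(expected.items())
    true_positives = len(pred_pairs & gold_pairs)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(pred_pairs)
    recall = true_positives / len(gold_pairs)
    return 2 * precision * recall / (precision + recall)


# Illustrative data: one wrong value ("total") and one missed field ("due").
predicted = {"invoice_no": "A-17", "total": "120.00", "currency": "USD"}
expected = {"invoice_no": "A-17", "total": "125.00", "currency": "USD", "due": "2024-01-31"}
print(round(field_level_f1(predicted, expected), 3))  # 0.571
```

Here precision is 2/3 and recall is 2/4, which gives an F1 of 4/7. A screenshot of one successful extraction would hide both errors.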

Step 5: use AI aggressively where toil is highest

AI is best used for:

scaffolding

routine endpoint implementation

test generation drafts

refactoring repetitive patterns

documentation first passes

schema mapping boilerplate

internal tool generation

This is where it creates leverage. The mistake is using it to bypass deliberate thinking about design and risk.

Step 6: instrument before launch, not after incidents

At minimum, production-bound AI systems should ship with:

structured logs

request tracing

error classification

latency histograms

usage and cost tracking

model and prompt version tracking

business KPI dashboards

alerts for degraded tool success or abnormal fallback rates

If you cannot answer “what happened, to whom, under which version, and why” after an incident, you are not ready to scale.

Step 7: roll out in slices

Do not go from internal demo to organization-wide deployment in one jump.

Safer rollout patterns include:

shadow mode against real traffic

internal-only pilot

single department launch

read-only mode before action-taking mode

approval-gated actions before autonomous actions

feature flags with per-tenant controls

Progressive rollout is one of the easiest ways to preserve AI speed while lowering enterprise risk.
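
The per-tenant feature flag pattern can be sketched as follows. This is an illustrative implementation, not a recommendation to build your own flag service: the function names and the feature name are hypothetical, and a mature feature-flag product would normally fill this role.

```python
import hashlib


def rollout_bucket(tenant_id: str, feature: str) -> int:
    """Map a tenant to a stable bucket in [0, 100) for a given feature."""
    digest = hashlib.sha256(f"{feature}:{tenant_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def is_enabled(tenant_id: str, feature: str, percent: int, allowlist=frozenset()) -> bool:
    """Enable for allowlisted tenants first, then for a growing percentage."""
    if tenant_id in allowlist:
        return True
    return rollout_bucket(tenant_id, feature) < percent


# Pilot tenant is on at 0 percent; everyone else waits for the dial to move.
print(is_enabled("tenant-pilot", "agent_actions", 0, allowlist=frozenset({"tenant-pilot"})))  # True
print(is_enabled("tenant-other", "agent_actions", 0))  # False
```

Hashing the tenant and feature together keeps each tenant's bucket stable across deployments, so raising the percentage only ever adds tenants and never flips existing ones off.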

Open source vs custom: where to build and where to buy time

One of the most expensive mistakes in enterprise AI is rebuilding commodity layers because a team wants control before it has clarity.

Control is valuable. Premature reinvention is not.

What usually should be borrowed

identity and access frameworks

observability stacks

workflow engines

queue and event infrastructure

evaluation harnesses

vector databases and retrieval frameworks

basic agent orchestration patterns

What often deserves customization

domain-specific tool contracts

policy and approval logic

business workflow decomposition

retrieval ranking tuned to your content

cost-control rules tied to usage patterns

internal reviewer and escalation pathways

A simple decision rule:

| If the component is mainly... | Bias toward |
|---|---|
| infrastructure plumbing | proven external component |
| commodity integration | framework or SDK |
| business differentiation | custom implementation |
| regulatory interpretation | custom policy layer |
| user-specific workflow logic | custom orchestration |

The practical principle is the same one we use with clients: borrow complexity, own accountability.

The maturity gap: senior engineers plus AI vs junior engineers plus AI

AI does not flatten engineering ability as much as people hoped. In many cases, it magnifies it.

Why? Because the bottleneck has shifted upward from syntax production to system judgment.

What stronger engineers do with AI

use it to remove mechanical toil

challenge generated assumptions

spot boundary risks early

write better evaluation cases

improve architecture throughput without weakening controls

What weaker usage patterns look like

accepting generated code because it “looks right”

creating abstractions faster than they can be reasoned about

mistaking passing tests for design correctness

shipping code without understanding failure modes

flooding reviewers with low-context changes

This is not an argument against junior engineers. It is an argument for better operating discipline. Teams need explicit review standards, stronger templates, and clearer architecture constraints so AI helps people grow instead of amplifying weak habits.

The review model has to change

Traditional code review centered heavily on the diff. In AI-assisted systems, that is no longer enough.

The new review stack should include four layers:

1. Design review

Before implementation, review:

system boundaries

trust model

state transitions

fallbacks

approval requirements

logging requirements

2. Implementation review

Then inspect:

correctness of critical paths

dependency choices

error handling

test adequacy

adherence to conventions

3. Operational review

Confirm:

monitoring exists

alerts exist

runbooks exist

rate limits and quotas are defined

rollback is possible

4. Governance review

Validate:

data handling rules

audit requirements

retention policies

human-in-the-loop obligations

change-management approvals

Most organizations still review AI systems as if they were ordinary web features. That underestimates the risk profile.

Observability for AI systems: what “good” actually looks like

Many teams know they need observability. Fewer know what good looks like for AI applications specifically.

A minimum observability blueprint

Correlation IDs: every user request, tool call, and downstream action tied together

Version metadata: model, prompt, retrieval config, policy version, code release

Decision logs: why a route, action, or fallback occurred

Safety events: blocked actions, threshold triggers, escalation to human review

Cost metrics: token usage by workflow, user, tenant, and tool chain

Quality metrics: hallucination reports, user corrections, re-run frequency, abandonment rate
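
To make the first three items of the blueprint concrete, here is a small sketch of a structured decision log line that carries a correlation ID and version metadata. The field names and version strings are assumptions for illustration; most teams would emit this through their existing logging or tracing stack rather than a standalone function.

```python
import json
import uuid
from datetime import datetime, timezone


def decision_event(correlation_id, tenant_id, decision, reason, versions):
    """Build one JSON log line tying a decision to its request and versions."""
    return json.dumps(
        {
            "ts": datetime.now(timezone.utc).isoformat(),
            "correlation_id": correlation_id,
            "tenant_id": tenant_id,
            "decision": decision,
            "reason": reason,
            "versions": versions,
        },
        sort_keys=True,
    )


correlation_id = str(uuid.uuid4())  # created once per user request
line = decision_event(
    correlation_id,
    "tenant-42",
    "fallback",
    "retrieval returned no results",
    {"model": "model-x", "prompt": "v3", "policy": "v1", "release": "1.4.0"},
)
print(line)
```

If every tool call and downstream action logs the same `correlation_id`, a single query can reconstruct what happened, to whom, and under which versions.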

Useful dashboards by audience

| Audience | Needs to see |
|---|---|
| Engineering | latency, failure classes, retries, tool errors |
| Product | completion rate, satisfaction, handoff rate, adoption |
| Operations | queue depth, SLAs, incident trends, workload shifts |
| Security/compliance | access anomalies, policy triggers, audit trails |
| Finance | cost per workflow, cost per successful outcome, model mix |

If your AI system is important enough to run, it is important enough to observe from multiple perspectives.

The missing section many articles ignore: cost reliability is part of system reliability

One topic often missed in discussions about production AI is that unreliable cost behavior is itself a production problem.

An application can be functionally correct and still operationally broken if its unit economics swing wildly under load.

Common cost failure patterns

tool loops that trigger repeated model calls

retrieval pipelines fetching far more context than needed

using premium models for low-risk or low-complexity tasks

lack of caching on stable lookups

multi-agent fan-out without budget controls

no per-tenant or per-user quotas

Example:

A support assistant handling 20,000 monthly requests may look cheap at pilot scale. If average cost is $0.04 per request, monthly model spend is about $800. But if poor prompt discipline, repeated retries, and unnecessary context expansion push that to $0.28 per request, the same workload now costs $5,600 before you count engineering and infrastructure. Add tool calls and premium model routing, and costs can exceed business value surprisingly fast.
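
The arithmetic in this example is easy to reproduce, and it extends naturally to cost per successful outcome. In the sketch below, the request volume and per-request costs come from the example above; the 85% success rate is a hypothetical figure added only to show the second calculation.

```python
MONTHLY_REQUESTS = 20_000


def monthly_cost(cost_per_request: float, requests: int = MONTHLY_REQUESTS) -> float:
    """Total monthly model spend for a given average cost per request."""
    return round(cost_per_request * requests, 2)


def cost_per_success(total_cost: float, requests: int, success_rate: float) -> float:
    """Spend divided by successful outcomes only, not by all requests."""
    return round(total_cost / (requests * success_rate), 4)


print(monthly_cost(0.04))  # 800.0
print(monthly_cost(0.28))  # 5600.0

# Hypothetical 85% task success rate, used only for illustration.
print(cost_per_success(monthly_cost(0.28), MONTHLY_REQUESTS, 0.85))  # 0.3294
```

The last line shows why cost per successful outcome is the more honest number: failed requests still cost money, so the effective unit cost is higher than the average cost per request.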

How to manage cost reliability

route tasks by complexity

set token budgets per workflow

cache deterministic sub-results

cap recursive planning depth

monitor cost per successful outcome, not just total spend

define fail-open vs fail-closed behavior when budgets are exceeded

This is one reason model selection should be deliberate. If you need help comparing tradeoffs across quality, speed, and price, LAXIMA’s LLM Picker is a practical starting point.
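
Two of the controls listed above, per-workflow token budgets and explicit fail-open versus fail-closed behavior, can be sketched together. `TokenBudget` and `BudgetExceeded` are hypothetical names for this example, and the token counts would come from whatever usage data your model provider returns.

```python
class BudgetExceeded(Exception):
    """Raised when a fail-closed budget is exhausted."""


class TokenBudget:
    """Track token spend for one workflow run against a fixed cap."""

    def __init__(self, max_tokens: int, fail_open: bool = False):
        self.max_tokens = max_tokens
        self.fail_open = fail_open
        self.used = 0

    def charge(self, tokens: int) -> bool:
        """Record usage; return False (fail-open) or raise (fail-closed) when over."""
        self.used += tokens
        if self.used <= self.max_tokens:
            return True
        if self.fail_open:
            return False  # caller continues, but should alert or downgrade
        raise BudgetExceeded(f"used {self.used} of {self.max_tokens} tokens")


budget = TokenBudget(max_tokens=4_000)
budget.charge(1_500)  # planning step
budget.charge(2_000)  # retrieval and answer
print(budget.used)  # 3500
```

Fail-closed suits workflows where runaway loops are the main risk. Fail-open suits user-facing paths where an interrupted answer is worse than a slightly expensive one, provided the overage is alerted on.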

The second missing section: evaluation should be continuous, not a pre-launch checkbox

Many teams do some benchmarking before launch and stop there. That is not enough because AI systems drift through:

model updates

prompt changes

retrieval corpus changes

user behavior changes

upstream API changes

policy tuning

Evaluation has to be continuous.

What a living evaluation program includes

golden datasets refreshed monthly or quarterly

production sampling for manual review

regression suites for critical workflows

adversarial tests for prompt injection or unsafe action paths

business metric checks tied to release gates

post-incident test case creation so failures are not repeated

Strong teams treat incidents as input to evaluation design. Every escape should produce a new test or control.
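
A release gate driven by golden cases can be very small. The sketch below assumes each case is a dict with `id`, `input`, and `expected` keys and that `predict` is whatever callable wraps your system; the 95% threshold and the toy router are illustrative only.

```python
def release_gate(cases, predict, min_pass_rate=0.95):
    """Run golden cases through `predict`; return (passed, pass_rate, failures)."""
    if not cases:
        raise ValueError("at least one golden case is required")
    failures = [c["id"] for c in cases if predict(c["input"]) != c["expected"]]
    pass_rate = 1 - len(failures) / len(cases)
    return pass_rate >= min_pass_rate, pass_rate, failures


# Illustrative golden set for a support-routing workflow.
golden = [
    {"id": "g1", "input": "refund please", "expected": "billing"},
    {"id": "g2", "input": "app crashes on login", "expected": "technical"},
]


def toy_router(text: str) -> str:
    return "billing" if "refund" in text else "technical"


passed, rate, failed_ids = release_gate(golden, toy_router)
print(passed, rate, failed_ids)  # True 1.0 []
```

After an incident, the failing input becomes a new entry in `golden`, which is how "every escape should produce a new test" turns into a mechanical habit.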

The third missing section: governance must be operational, not just policy language

Organizations often claim to have AI governance when what they really have is a document. Real governance lives in operational controls.

Operational governance means:

approval thresholds coded into workflows

role-based permissions on prompts, tools, and data

audit logs that map actions to users and system versions

documented model usage policies by use case

change management for prompts, tools, and policies

clear ownership for incidents and exceptions

If no one can answer who approved a behavior, who owns a failure mode, or how a prompt changed last week, the system is not governed in any meaningful sense.
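
Here is what "approval thresholds coded into workflows" might look like in its simplest form. The refund scenario, the 500.0 threshold, and the policy version string are hypothetical; the point is that the rule and its audit record live in code, not only in a policy document.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy: refunds above this amount need a separate approver.
APPROVAL_THRESHOLD = 500.0
POLICY_VERSION = "refund-policy-v1"


@dataclass(frozen=True)
class AuditRecord:
    action: str
    amount: float
    requested_by: str
    approved_by: Optional[str]
    policy_version: str
    allowed: bool


def authorize_refund(
    amount: float, requested_by: str, approved_by: Optional[str] = None
) -> AuditRecord:
    """Allow small refunds; require a distinct approver above the threshold."""
    needs_approval = amount > APPROVAL_THRESHOLD
    has_valid_approver = approved_by is not None and approved_by != requested_by
    allowed = (not needs_approval) or has_valid_approver
    return AuditRecord(
        "refund", amount, requested_by, approved_by, POLICY_VERSION, allowed
    )
```

Every call returns an `AuditRecord`, including denied ones, so the question "who approved this, under which policy version" always has an answer.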

For companies not sure whether they have the organizational foundation to support this, an AI readiness assessment is often more useful than another prototype.

A practical scorecard for production readiness

Before rollout, ask these 12 questions. If you cannot answer most of them confidently, the system is still a pilot.

Are business success metrics defined beyond demo quality?

Are trust boundaries and permission rules documented?

Do we know the cost of a wrong answer or wrong action?

Do we have a golden evaluation set for the core workflow?

Can we trace a user request across prompts, tools, and actions?

Do we log decisions and fallbacks in a debuggable way?

Is rollback possible without a high-risk manual scramble?

Do we have rate limits, budgets, and quota controls?

Can we explain how the system behaves under partial failure?

Is there a human escalation path for low-confidence or high-risk cases?

Do we know which components are commodity versus differentiating?

Has the system been rolled out gradually under real conditions?

Score interpretation:

| Score | Meaning |
|---|---|
| 10-12 yes | Strong candidate for scaled production rollout |
| 7-9 yes | Promising, but likely needs targeted hardening |
| 4-6 yes | Pilot stage, significant operational risk remains |
| 0-3 yes | Demo or experiment, not a production system |
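
If you want to track the scorecard across several systems, the bands above translate directly into a small helper. The function name and the sample answers are illustrative.

```python
def interpret_score(yes_count: int) -> str:
    """Map the number of 'yes' answers (0 to 12) to the bands in the table."""
    if not 0 <= yes_count <= 12:
        raise ValueError("yes_count must be between 0 and 12")
    if yes_count >= 10:
        return "Strong candidate for scaled production rollout"
    if yes_count >= 7:
        return "Promising, but likely needs targeted hardening"
    if yes_count >= 4:
        return "Pilot stage, significant operational risk remains"
    return "Demo or experiment, not a production system"


# Sample answers to the 12 questions, in order (9 of 12 are "yes").
answers = [True, True, False, True, True, True, False, True, True, False, True, True]
print(interpret_score(sum(answers)))  # Promising, but likely needs targeted hardening
```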

Case pattern: the most common enterprise AI rebuild

Across industries, we keep seeing a familiar sequence.

A team proves a concept quickly using AI-assisted coding.

The demo succeeds because it follows the happy path.

Stakeholders expand scope before architecture hardening is done.

Production complexity appears: permissions, retries, data quality, audit needs, human review.

The original implementation starts bending under requirements it was never designed for.

The team either rebuilds key layers or adds increasingly fragile patches.

The rebuild is not proof that AI failed. It is proof that the organization confused speed of assembly with completeness of system design.

When done correctly, AI compresses the mechanical work inside a sound architecture. When done poorly, it just accelerates the creation of redesign debt.

What leaders should measure instead of output volume

Executives and managers need metrics that reflect durable progress.

Better indicators for AI-assisted engineering teams

lead time from approved design to stable release

change failure rate

mean time to detect and resolve incidents

percentage of workflows with end-to-end tracing

cost per successful task completion

manual review hours per release

escape rate of critical defects

user trust indicators: override rate, rework rate, escalation rate

These are not as flashy as “PR volume increased 300%,” but they align with business reality.

What teams should do next: a practical operating model

If you want the upside of AI-generated code without the downside of invisible risk, use this operating model:

For engineering leaders

shift review earlier to design and boundary decisions

raise standards on auth, data access, and core business logic

fund observability as part of delivery, not platform overhead

ban vanity metrics as proof of progress

For individual engineers

use AI for drafts, not authority

verify assumptions against the real system, not only the generated diff

run local checks, test edge cases, and inspect failure paths

treat “looks plausible” as the start of review, not the end

For product and operations teams

define where human handoff is mandatory

agree on acceptable failure rates by workflow

track trust and correction behavior from real users

phase rollout by risk and reversibility

For executives

expect faster implementation but not magically lower operational rigor

insist on controls proportionate to business impact

separate prototype budgets from production budgets

measure reliability and ROI together

The LAXIMA point of view

The market has spent the last two years celebrating that AI can generate code. That part is now table stakes.

The next advantage will come from teams that can generate code and preserve clarity, control, and trust at scale.

That means:

design before generation

evaluation before claims

observability before scale

governance in the workflow, not only in the handbook

selective customization instead of infrastructure reinvention

In short: AI lowers the cost of producing software artifacts. It does not lower the cost of being wrong in production.

That is why judgment is becoming the highest-leverage engineering input. And that is why the best teams will not be the ones that write the most code with AI. They will be the ones that know what to trust, what to verify, what to instrument, and what not to ship.