Recent reporting on ChatGPT ad delivery has made one thing clear: AI-native advertising platforms are building tightly coupled loops between ad selection, click tracking, merchant-side events, and optimization. That matters far beyond media teams. It affects analytics, privacy, RevOps, ecommerce ops, and the systems architecture behind growth.

This guide explains how AI in advertising actually works, where operations teams should focus first, and how to build a scalable, governable foundation before complexity outruns control.

What AI in advertising actually means

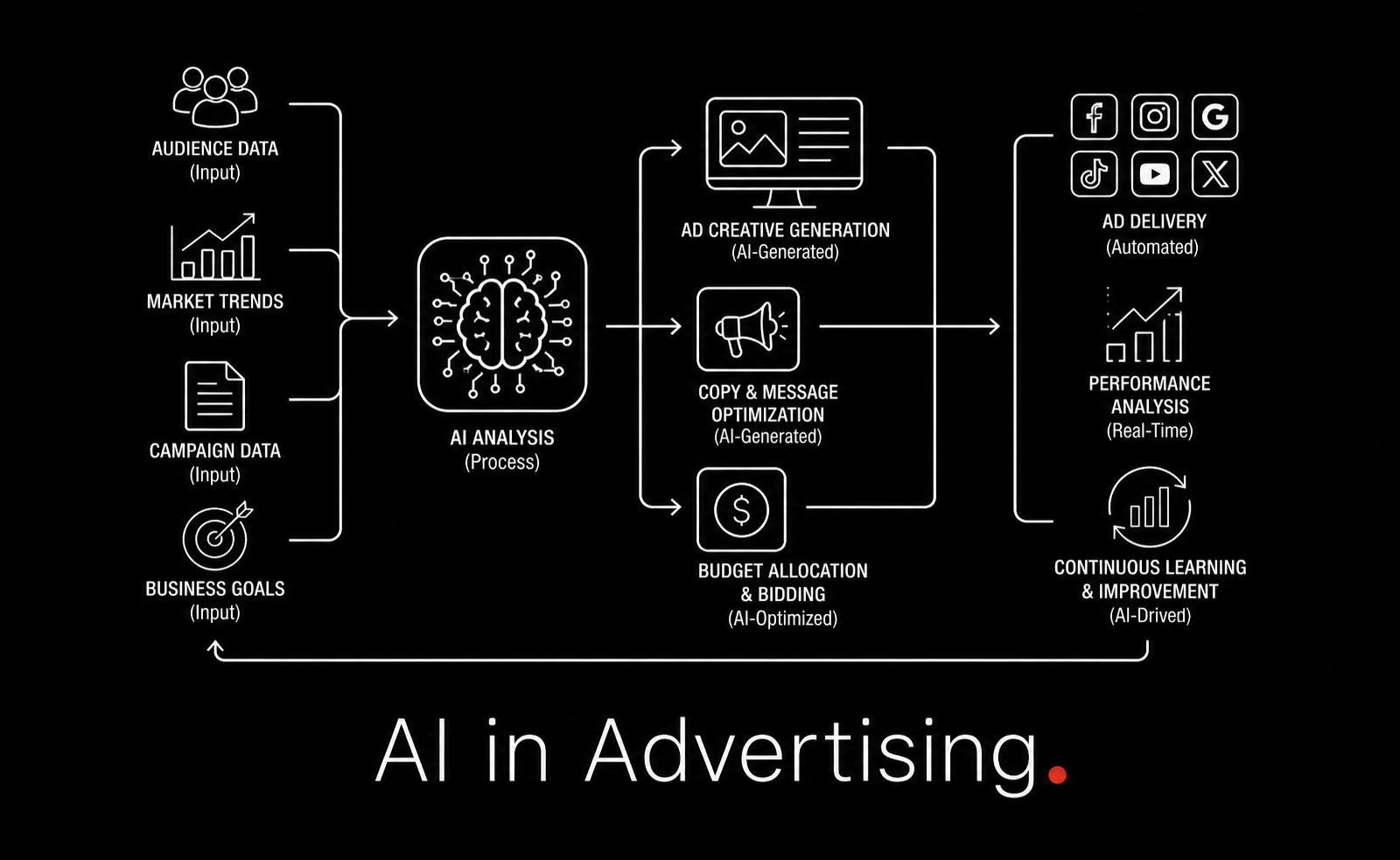

At a high level, AI in advertising is the use of machine learning, language models, automation, and predictive systems to improve how ads are targeted, created, delivered, measured, and optimized.

That definition is broad. For operators, a more useful framing is this:

Inputs: audience data, content context, campaign rules, budgets, creative variants, conversion events

Decision layer: models determine who should see what, where, when, and at what bid or priority

Feedback loop: clicks, views, purchases, downstream events, and retention data feed back into optimization

Controls: privacy, governance, approvals, observability, and exception handling keep the system safe

Traditional ad tech already used algorithmic optimization. What is different now is the breadth of AI involvement. Systems can generate copy, adapt creative in real time, classify intent from text, orchestrate channel-level budget changes, and increasingly act through agent-like workflows.

If your team already works on workflow automation, this should feel familiar. Advertising is becoming another business process that needs structured orchestration, not just channel expertise. That is the same shift we see across broader AI automation initiatives.

How AI ad systems work end to end

The highest-value operational insight from modern AI advertising is that performance does not come from one model. It comes from a closed loop.

1. Ad selection

The platform first decides whether to show an ad and which advertiser or creative unit to serve. Inputs may include:

Current user intent

Conversation or page context

Historical engagement patterns

Advertiser bid constraints

Inventory availability

Brand safety and policy filters

In AI-native surfaces such as conversational interfaces, contextual intent is especially important. In the observed ChatGPT examples, different conversation topics triggered different advertisers, suggesting that immediate session context is a primary signal source.

2. Delivery and presentation

Once selected, the ad is assembled and delivered in a format appropriate to the interface: card, carousel, sponsored recommendation, inline unit, or another structured placement. In AI interfaces, this is increasingly not a static banner. It is a structured object embedded into an interactive response stream.

That matters operationally because placement logic is now tied to product and frontend architecture. If you are working with adaptive AI interfaces, the same design principles described in Generative UI start to apply to advertising surfaces too.

3. Click and referral instrumentation

When a user clicks, the platform typically attaches tracking parameters or encrypted tokens that preserve context about the ad, request, and attribution chain. This is where operations teams often lose visibility, because the user leaves the platform and enters merchant-controlled systems.

The Buchodi analysis of ChatGPT ads shows a notably structured handoff: ad units include multiple tokens, some carried in the click URL, some retained server-side, and merchant-side scripts later report events back to OpenAI. Whether or not every platform copies this exact design, the pattern is important: AI ad attribution increasingly relies on multi-step identity and event continuity.
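
To make the handoff pattern concrete, here is a minimal sketch of a signed click token. It is not OpenAI's implementation or any platform's real format: the key, the field names, and the functions `make_click_token` and `verify_click_token` are hypothetical. The sketch signs the token with an HMAC rather than encrypting it, so the contents stay readable but tampering is detectable.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical signing key; a real platform would keep and rotate this server-side.
SECRET = b"demo-secret"


def make_click_token(ad_id: str, request_id: str) -> str:
    """Serialize ad context and append an HMAC-SHA256 signature."""
    payload = json.dumps(
        {"ad_id": ad_id, "request_id": request_id}, sort_keys=True
    ).encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).digest()
    return ".".join(
        base64.urlsafe_b64encode(part).decode() for part in (payload, signature)
    )


def verify_click_token(token: str) -> Optional[dict]:
    """Return the decoded payload if the signature is valid, otherwise None."""
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:  # wrong number of parts or invalid base64
        return None
    expected = hmac.new(SECRET, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, signature):
        return None
    return json.loads(payload)
```

The point for operators is the shape of the flow: the merchant side can carry the token without being able to forge it, and the platform can reject events whose token does not verify.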

4. Merchant-side measurement

After the click, the destination site or app collects events such as:

Landing page view

Product detail view

Add to cart

Checkout initiation

Purchase

Lead form completion

Those events are sent to the advertiser’s systems, the ad platform’s systems, or both. The quality of this implementation determines whether optimization can happen on useful outcomes or only on shallow proxies like clicks.

5. Optimization

With enough event feedback, the system adjusts bids, placements, creative combinations, and audience priorities. This is where AI creates leverage. But it only works if the upstream data is reliable and timely.

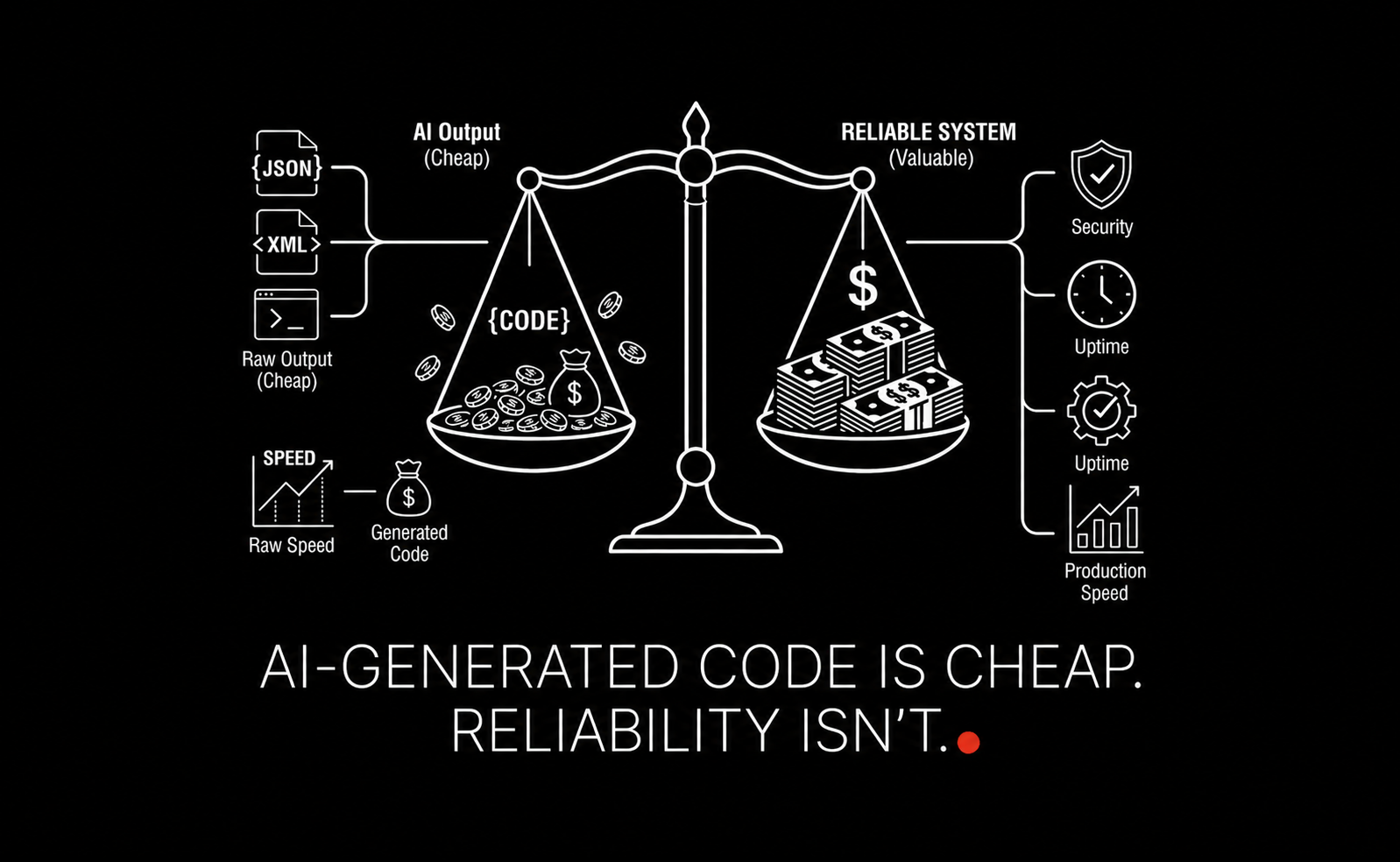

That is why LAXIMA’s view is simple: in AI advertising, model sophistication is overrated relative to event integrity. Most performance problems are operations problems in disguise.

What the ChatGPT ad attribution loop tells operators

The Buchodi research is valuable not because it confirms one platform’s implementation details forever, but because it reveals what AI-native ad systems are optimizing for:

Contextual ad matching: ads appear tied to user intent in-session

Structured ad objects: ads are machine-readable units, not just visual placements

Tokenized attribution: multiple identifiers preserve integrity across impression, click, and merchant events

Merchant SDK measurement: post-click behavior is folded back into optimization

Closed-loop visibility: the platform seeks to observe more of the customer journey, not less

For operations teams, the lesson is not “copy this exact stack.” It is “prepare for tighter platform-to-merchant telemetry coupling.” If your organization still treats campaign measurement as UTM tags plus a dashboard export, you are behind the architecture curve.

Teams should evaluate whether their data foundation can support:

First-party event capture

Consistent campaign and creative IDs

Deduplicated conversions

Cross-system identity resolution where legally permitted

Near-real-time feedback into optimization systems

If that is not in place, your paid media performance ceiling may be lower than your spend suggests.
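
As one illustration of the checklist above, the following sketch deduplicates conversions that arrive more than once, for example from both a browser tag and a server-side send. The `ConversionEvent` fields and the idea of a shared `event_id` are assumptions made for this example, not a specific platform's schema.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class ConversionEvent:
    event_id: str      # hypothetical ID shared by every send of the same conversion
    event_name: str
    campaign_id: str
    creative_id: str
    timestamp: float   # Unix seconds


def deduplicate(events: Iterable[ConversionEvent]) -> List[ConversionEvent]:
    """Keep the earliest event per event_id, returned in timestamp order."""
    first_seen = {}
    for event in sorted(events, key=lambda e: e.timestamp):
        # setdefault only stores the first (earliest) event for each ID.
        first_seen.setdefault(event.event_id, event)
    return list(first_seen.values())
```

Keeping consistent campaign and creative IDs on every event is what lets a step like this run the same way across systems.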

The biggest use cases for AI in advertising

Most articles cover the standard list: targeting, personalization, creative testing, optimization, and fraud detection. Those are real. But operators need to know where each use case changes workflow design.

Targeting and segmentation

AI helps identify high-propensity segments using behavioral, contextual, transactional, and sometimes intent-derived signals. Instead of broad buckets like “mid-market buyers,” models can identify narrower groups such as:

Visitors who viewed pricing twice in 7 days but did not request a demo

Shoppers with high basket value but low checkout completion

Users whose query patterns indicate urgent purchase intent

Operational requirement: segment definitions must be standardized across analytics, CRM, CDP, and ad platforms. If every system uses different logic, “AI targeting” becomes a reporting illusion.
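
One way to keep segment logic consistent is to define it once in code and reuse that definition everywhere. The sketch below encodes the first example segment. The event names `pricing_view` and `demo_request` are hypothetical, and it assumes "twice" means at least two views and that the demo request is checked within the same 7-day window.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Event = Tuple[str, datetime]  # (event_name, timestamp)


def in_pricing_intent_segment(events: List[Event], now: datetime) -> bool:
    """True if the visitor viewed pricing 2+ times in the last 7 days with no demo request."""
    window_start = now - timedelta(days=7)
    recent = [(name, ts) for name, ts in events if window_start <= ts <= now]
    pricing_views = sum(1 for name, _ in recent if name == "pricing_view")
    requested_demo = any(name == "demo_request" for name, _ in recent)
    return pricing_views >= 2 and not requested_demo
```

If analytics, CRM, and ad platforms all consume the output of one function like this, the segment means the same thing in every report.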

Personalization

AI can tailor messaging, offers, landing pages, and recommended products. Personalization can improve CTR and conversion rate, but only when constrained by strong content operations.

For example, a retailer might run 200 ad variants across 12 audience slices. Without approval workflows, brand controls, and content labeling, that quickly becomes unmanageable.

Operational requirement: build a creative taxonomy. Every asset should have metadata for audience, offer, stage, compliance status, and expiration date.
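
A creative taxonomy can be as simple as a typed record plus a pre-deployment check. The sketch below uses the metadata fields named above; the allowed stage values and the `approved` status string are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List

# Hypothetical funnel stages; substitute your own taxonomy.
ALLOWED_STAGES = {"awareness", "consideration", "conversion"}


@dataclass
class CreativeAsset:
    asset_id: str
    audience: str
    offer: str
    stage: str
    compliance_status: str
    expires_on: date


def deployment_issues(asset: CreativeAsset, today: date) -> List[str]:
    """Return the reasons an asset should not be deployed; an empty list means it passes."""
    issues = []
    if asset.stage not in ALLOWED_STAGES:
        issues.append(f"unknown stage: {asset.stage}")
    if asset.compliance_status != "approved":
        issues.append(f"compliance status is {asset.compliance_status}")
    if asset.expires_on < today:
        issues.append(f"expired on {asset.expires_on.isoformat()}")
    return issues
```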

Automated budget allocation

AI systems can shift spend between campaigns, geographies, placements, devices, and dayparts based on performance signals. This is one of the most practical benefits because it directly affects efficiency.

Example:

Campaign A CPA: $42

Campaign B CPA: $68

Campaign C CPA: $39 but limited by impression share

An AI-driven budget layer may move 15-20% of spend from B into C during peak conversion windows, then reevaluate hourly.

Operational requirement: guardrails matter. Define floor and ceiling budgets, pacing thresholds, approved reallocation ranges, and human review triggers.
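
The guardrails above can be sketched as a bounded reallocation function. This is a simplified illustration, not a production budget engine: the function name, the dictionary layout, and the 20% cap (taken from the upper end of the example range) are assumptions.

```python
from typing import Dict


def reallocate(
    budgets: Dict[str, float],
    source: str,
    target: str,
    fraction: float,
    floors: Dict[str, float],
    ceilings: Dict[str, float],
    max_fraction: float = 0.20,  # assumed approved reallocation range
) -> Dict[str, float]:
    """Move up to `fraction` of the source budget to the target, within floor and ceiling."""
    if not 0 <= fraction <= max_fraction:
        raise ValueError("requested shift is outside the approved range")
    amount = budgets[source] * fraction
    # Never push the source below its floor or the target above its ceiling.
    headroom_source = budgets[source] - floors.get(source, 0.0)
    headroom_target = ceilings.get(target, float("inf")) - budgets[target]
    amount = max(0.0, min(amount, headroom_source, headroom_target))
    updated = dict(budgets)
    updated[source] -= amount
    updated[target] += amount
    return updated
```

For example, with invented daily budgets of 1,000 each and a floor of 900 on Campaign B, a requested 15% shift from B to C is trimmed to 100, so B stops at its floor rather than at 850.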

Dynamic creative optimization

DCO systems combine headlines, descriptions, images, CTAs, and offers into different combinations based on audience and context. This can outperform manually built variants, especially at scale.

Operational requirement: measure at the component level, not just the ad level. Otherwise you do not know whether the lift came from the offer, the image, or the audience.
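
A minimal sketch of component-level measurement, assuming each reporting row records which headline, image, or offer was used along with impressions and conversions. The row layout and function name are hypothetical. Note that these are pooled rates per component value, which is a starting point for comparison and not a causal estimate of lift.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping


def conversion_rate_by_component(
    rows: Iterable[Mapping], component: str
) -> Dict[str, float]:
    """Pool impressions and conversions per value of one component (e.g. 'headline')."""
    totals = defaultdict(lambda: [0, 0])  # value -> [impressions, conversions]
    for row in rows:
        bucket = totals[row[component]]
        bucket[0] += row["impressions"]
        bucket[1] += row["conversions"]
    return {
        value: conversions / impressions
        for value, (impressions, conversions) in totals.items()
        if impressions > 0
    }
```

Running the same function with `component="image"` or `component="offer"` gives a view of each dimension that an ad-level report would blend together.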

Performance analysis and forecasting

AI can surface anomalies, likely winners, seasonality effects, and underperforming combinations faster than analysts working manually. The real gain is cycle time.

If your current optimization cadence is weekly, AI can move it toward daily or intra-day.

Operational requirement: exception routing. Someone must own what happens when the model flags a problem.
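
As a sketch of flagging plus routing, the example below uses a simple z-score against recent history and a lookup table of owners. Real systems typically use more robust detection; the threshold, the metric categories, and the owner names here are all assumptions for illustration.

```python
from statistics import mean, stdev
from typing import Sequence

# Hypothetical routing table: which function owns which kind of exception.
OWNERS = {"spend": "operations", "tracking": "data", "policy": "legal"}


def is_anomalous(history: Sequence[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it sits more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


def route_exception(category: str) -> str:
    """Return the owning team, defaulting to operations when the category is unknown."""
    return OWNERS.get(category, "operations")
```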

Fraud detection and traffic quality

AI can identify suspicious click patterns, unusual conversion clusters, and low-quality inventory. This is especially important when more spend is automated.

Operational requirement: don’t let fraud controls live only inside vendors. Keep independent analytics and reconciliation logic wherever possible.

Where operations teams should focus first

Many companies start in the wrong place: with AI-generated ad copy. That is easy to trial, but rarely the bottleneck.

A better sequence is:

Fix measurement

Standardize campaign data

Automate repetitive optimization tasks

Add controlled personalization

Expand into predictive and agentic workflows

This mirrors what we see across enterprise AI programs more broadly. Teams that skip data discipline usually hit a ceiling fast. If you need a broader blueprint for building reliable systems first, see "The Executive’s Guide to RAG" and "AI-Generated Code Is Cheap. Reliability Isn’t."

A practical maturity model

| Stage | What it looks like | Main risk | Next step |
|---|---|---|---|
| Manual | Channel teams optimize by hand using dashboards and exports | Slow reaction time | Unify event tracking |
| Automated | Rules manage bids, pacing, alerts, and reporting | Rules conflict across systems | Create central governance |
| AI-assisted | Models recommend targeting, creative, and budget shifts | Recommendations lack trust | Improve explainability and QA |
| Closed-loop AI | Conversion data feeds directly into optimization with guardrails | Data integrity failures scale faster | Add observability and audits |
| Agentic | Systems propose, test, reallocate, and report with human oversight | Loss of control and accountability | Formalize approvals and rollback paths |

Governance, privacy, and compliance are now operational concerns

Most writing on AI advertising mentions trust or transparency. Little of it explains what that means in practice.

For operations, governance in AI advertising should answer five questions:

What data is the system allowed to use?

What decisions can it make automatically?

What requires review?

How is performance audited?

How are exceptions handled?

That becomes critical as platforms collect more post-click data and advertisers push for deeper attribution.

Minimum governance checklist

Documented approved data sources

Consent-aware event collection

Retention limits for ad-related identifiers

Named owners for campaign automation rules

Bias and audience exclusion reviews

Creative approval workflows

Monitoring for drift and anomalous spend

Rollback procedure for model or rules failures

If your organization is early in this journey, start with an AI readiness assessment. Advertising teams often adopt automation faster than governance catches up.

The missing piece most articles ignore: observability

Observability is one of the most important parts of AI ad operations, and one that most coverage of the topic skips.

When AI is involved in ad operations, you need observability, not just reporting. Reporting tells you what happened. Observability helps you diagnose why.

Track at least four layers:

Business metrics

ROAS

CPA

Conversion rate

Revenue per visit

Customer acquisition cost by cohort

Operational metrics

Event latency

Campaign sync failures

Creative approval cycle time

Budget pacing variance

Deduplication rate across conversions

Model and decision metrics

Recommendation acceptance rate

Prediction confidence bands

Drift in audience scoring

False-positive fraud flags

Governance metrics

Percentage of spend under automated control

Number of manual overrides

Policy violations caught pre-launch

Campaigns missing consent-compliant tracking

This is where ad ops starts looking more like AI systems engineering. If your team is moving toward more autonomous workflows, the concepts in agentic AI systems for business automation are directly relevant.
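
Three of the operational metrics listed above reduce to short formulas. The sketch below shows one reasonable way to compute them; the function names and the exact definitions (for instance, measuring pacing against a linear spend plan) are assumptions, and your own definitions may differ.

```python
from statistics import median
from typing import Iterable, Tuple


def budget_pacing_variance(spent: float, budget: float, elapsed_fraction: float) -> float:
    """Relative gap between actual spend and a linear plan; 0.2 means 20% ahead of plan."""
    expected = budget * elapsed_fraction
    if expected <= 0:
        raise ValueError("expected spend must be positive")
    return (spent - expected) / expected


def deduplication_rate(received: int, unique: int) -> float:
    """Share of received conversion events that were duplicates."""
    if received == 0:
        return 0.0
    return (received - unique) / received


def median_event_latency(pairs: Iterable[Tuple[float, float]]) -> float:
    """Median seconds between when an event occurred and when it was received."""
    return median(received_at - occurred_at for occurred_at, received_at in pairs)
```

Tracking these as time series, rather than as one-off checks, is what turns them from reporting into observability.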

The second missing piece: a clean operating model

Another gap in most AI advertising content is organizational design. Tools do not fail in isolation. They fail in messy handoffs.

A practical operating model usually needs four owners:

Marketing or media: strategy, spend goals, channel execution

Operations: workflow design, campaign taxonomy, SLAs, exception management

Data/analytics: attribution logic, event quality, experimentation, forecasting

Legal/privacy: consent, usage policy, regional compliance

If no single function owns the workflow across those boundaries, you get fragmented automation: lots of tools, little control.

Recommended RACI for AI advertising changes

| Activity | Marketing | Operations | Data | Legal/Privacy |
|---|---|---|---|---|
| Launch new AI bidding workflow | A | R | C | C |
| Approve data sources for targeting | C | C | R | A |
| Define attribution model | C | C | A/R | C |
| Approve dynamic creative templates | A/R | C | C | C |
| Respond to spend anomaly | C | A/R | R | I |

How to implement AI in advertising without creating chaos

Phase 1: instrument the funnel

Start with reliable first-party event capture. You need consistent identifiers for campaigns, creatives, sessions, and conversions. Eliminate duplicate event firing before adding more automation.

Phase 2: standardize taxonomy

Create naming conventions for campaigns, audiences, offers, and creative assets. If creative labels are inconsistent, optimization insights will be noisy.
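
A naming convention is easiest to enforce when it is machine-checkable. The sketch below validates campaign names against one hypothetical pattern, `<channel>_<region>_<objective>_<YYYYMM>`. The pattern itself is an assumption for illustration, not a recommended standard.

```python
import re
from typing import Dict, Optional

# Hypothetical convention, e.g. "search_us_leadgen_202501".
CAMPAIGN_NAME = re.compile(
    r"(?P<channel>[a-z]+)_(?P<region>[a-z]{2})_(?P<objective>[a-z]+)_(?P<period>\d{6})"
)


def parse_campaign_name(name: str) -> Optional[Dict[str, str]]:
    """Return the name's components if it follows the convention, otherwise None."""
    match = CAMPAIGN_NAME.fullmatch(name)
    return match.groupdict() if match else None
```

A check like this can run at campaign creation time, so non-conforming names are rejected before they pollute reporting.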

Phase 3: automate low-risk tasks

Good early candidates include:

Budget pacing alerts

Underperformance detection

Creative expiration checks

Audience overlap detection

Report generation

These are high-value, low-drama uses of AI and workflow automation. They fit naturally alongside broader workflow optimization efforts.

Phase 4: deploy controlled optimization

Enable AI-assisted recommendations or bounded auto-reallocation. Set explicit constraints such as:

No more than 10% daily budget shift without approval

No new audience activation without review

No creative deployment without compliant metadata
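
The three constraints above can be expressed as a small policy check that classifies each proposed change. The `ProposedChange` fields and the three outcome labels are hypothetical; the 10% limit comes from the first constraint.

```python
from dataclasses import dataclass

MAX_AUTO_SHIFT = 0.10  # daily budget shift allowed without approval


@dataclass
class ProposedChange:
    budget_shift_fraction: float = 0.0
    activates_new_audience: bool = False
    deploys_creative: bool = False
    creative_metadata_compliant: bool = True


def evaluate(change: ProposedChange) -> str:
    """Classify a change as 'block', 'review', or 'auto'."""
    # Creative without compliant metadata is never deployed.
    if change.deploys_creative and not change.creative_metadata_compliant:
        return "block"
    # Larger shifts and new audiences need a human decision.
    if abs(change.budget_shift_fraction) > MAX_AUTO_SHIFT or change.activates_new_audience:
        return "review"
    return "auto"
```

Keeping these rules in one place, outside any single vendor tool, also gives you an audit trail of why a change was or was not applied automatically.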

Phase 5: move to closed-loop learning

Once attribution is trusted, feed downstream events back into optimization. Prioritize business outcomes, not vanity metrics. A click model is easy. A profit-aware model is useful.

Decision criteria for choosing AI advertising tools

When evaluating vendors or platform features, operations teams should ask:

Measurement: Can we export raw event data? How is deduplication handled?

Control: What decisions are fully automated vs configurable?

Transparency: Can we understand why spend shifted or an audience was prioritized?

Integration: Does it connect cleanly to CRM, analytics, CDP, and ecommerce systems?

Governance: Are there approval layers, audit logs, and role-based controls?

Latency: How quickly do conversion signals influence optimization?

Reliability: What happens when data feeds fail?

Those questions sound basic, but they are what separates a usable system from a demo.

FAQ

What is the biggest benefit of AI in advertising?

For operators, the biggest benefit is not just better targeting. It is faster optimization at scale. AI reduces manual analysis and can improve budget efficiency, creative testing, and response time to performance changes.

Is AI in advertising mainly about ad generation?

No. Creative generation is the visible part, but the larger impact is in targeting, delivery, attribution, forecasting, and workflow automation.

What is the biggest risk?

Poor measurement. If event data is wrong, AI systems optimize toward the wrong outcome faster than humans would.

Do operations teams need to care about ad attribution architecture?

Yes. As platforms use richer tokenized and SDK-based attribution loops, operations teams need to understand how data moves across systems, where trust breaks, and what can be audited.

How should companies start?

Start with event quality, taxonomy, and guardrails. Then automate repetitive decisions. Do not jump straight to full autonomy.

Final takeaway

AI in advertising is maturing from a campaign enhancement into an operating system for demand generation. The underlying pattern is clear across both mainstream industry guidance and emerging AI-native platforms: selection, delivery, measurement, and optimization are becoming more tightly integrated.

The companies that benefit most will not be the ones with the most AI features switched on. They will be the ones with the cleanest data, clearest controls, and strongest workflow design.

That is the LAXIMA point of view: treat AI advertising as an operations architecture problem first. Once the loop is trustworthy, performance follows.